Stop Over-Crediting Last Click: Practical Incrementality Tests

Learn how incrementality testing reveals the real impact of marketing channels and prevents over-crediting last-click conversions.

If you’re a marketing leader or performance team that feels stuck optimizing campaigns based on the number of attributed conversions reported in dashboards, you’re not alone.

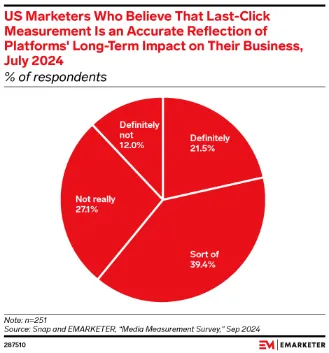

Roughly 22% of organizations still rely exclusively on last-click attribution. And 38% of marketers say attribution is their #1 analytics challenge. Yet only about 31% feel confident in their current models.

What’s worse, last-click credit can make mid- and upper-funnel investments look weak, skew ROI calculations, and mislead decisions.

That’s where incrementality testing comes in. From simple holdout designs to sophisticated lift studies, it isolates the real impact of your advertising by comparing outcomes in exposed groups versus control groups that don’t see the campaign.

When you run these tests correctly, you can see how many incremental conversions, leads, or sales your efforts actually deliver versus what would have happened naturally. It’s a core part of a complete measurement framework alongside Marketing Mix Models (MMM) and multi-touch attribution.

In this guide, we’ll share:

- Why last-click attribution can mislead marketing decisions

- Signs your reporting is over-crediting the last click

- What incrementality testing actually means in marketing measurement

- Practical incrementality tests you can run today

- How to design reliable experiments and control groups

- How to interpret lift, accuracy, and real business impact

P.S. Not sure which campaigns truly drive incremental revenue and which ones just claim credit? At 9AM, we help brands design incrementality tests and analytics frameworks that show what your marketing actually causes.

Why Is Last-Click Attribution Problematic?

For modern marketing teams, particularly those running multi-channel campaigns, last-click attribution may feel like a familiar friend. It’s simple, supported by tools like Google Analytics and Google Ads, and gives you a clear conversion number tied to a click.

But that simplicity comes at the cost of accuracy and true impact.

Consider a prospect first discovering a product on LinkedIn, then engaging with an email marketing campaign, and finally clicking on a Google PPC ad to buy. Last-click will assign all sales credit to that Google click, completely undervaluing earlier influences.

Here are the shortcomings of this approach summed up:

- Ignores the customer journey: Last-click doesn’t account for playbooks where multiple channels, like email, organic search, and paid social, work together over time to influence behavior.

- Overweights ‘closing’ channels: Channels closer to the purchase (e.g., branded search or retargeting) get disproportionate credit, even if they’re just harvesting demand created earlier by other efforts.

- Misses real causal influence: Modern buyers interact across devices, apps, organic content, and offline touchpoints that don’t generate clicks yet still shape decisions. Click-based models ignore that influence.

Fortunately, most marketers realize the flaws, as the figure below from Emarketer shows (only 1 in 5 marketers are confident in last-click attribution).

This doesn’t mean last-click is useless. It’s clear and easy to track, and in short cycles, it can help identify immediate conversions.

But it’s incomplete and potentially misleading as the backbone of measurement, especially for companies trying to understand incremental impact and make data-driven decisions about marketing investments.

Signs You’re Over-Crediting Last Click

If your attribution foundation is built on last-click models, the default in many Google Analytics setups, you’re likely experiencing systematic mis-allocation of credit and budget.

This doesn’t just show up as numbers on a report: it shows up as poor decisions, ineffective investments, and stagnating growth.

These are the signs we’d look out for:

- Branded search driving “amazing” ROAS: Suppose your Google branded search campaigns appear to outperform every other channel, but total revenue isn’t growing. In that case, your reporting isn’t revealing incremental impact, just attributing credit. This leads to budget increases for search at the expense of other activities that may be driving actual demand.

- Retargeting outperforms prospecting dramatically: Retargeting ads are a classic last-click “winner” because they catch users who were already warm and at the brink of conversion. If you’re seeing retargeting campaigns deliver much lower cost per conversion than prospecting, but scaling prospecting doesn’t increase audience inflow or pipeline, that’s another red flag.

- Performance barely changes when ads pause: If you pause a campaign and total conversions drop sharply, the campaign was likely contributing incremental lift. But if conversions stay the same, or drop only slightly, last-click was crediting activity that wasn’t causally moving outcomes.

- Paid social appears weak in-platform but strong in blended revenue: Social media ads (Facebook, Instagram, LinkedIn, etc.) show modest in-platform conversion numbers, but when you look at blended revenue metrics across channels, you see outsized gains. This suggests these campaigns were driving influence earlier in the journey that last-click simply doesn’t capture.

Now that you understand what over-crediting last click looks like, let’s talk about what helps you find the real answers.

What Is Incrementality Testing?

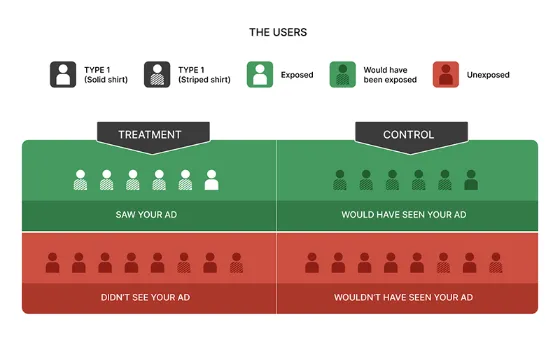

Incrementality testing is a controlled measurement method that tells you the actual causal impact of a marketing campaign, channel, or tactic on your business outcomes (like conversions, revenue, or leads). It works by comparing a treatment group that sees the marketing with a control group that does not.

The difference in outcomes between the two groups, called incremental lift, shows what the campaign added beyond what would have happened without it. That, in turn, helps you move past simple attribution credit and toward real performance insight.

It answers critical questions for growth teams, such as: “Did this ad campaign actually cause extra sales?” or “Would revenue have risen without this activity?”

This methodology can be applied across channels, from Google Ads and Facebook to email and PPC, using experiments (which we’ll cover shortly).

Benefits of Using Incrementality Testing in Marketing

Incrementality tests deliver actionable insights into what drives customer behavior and growth. Unlike last-click attribution, which assigns credit based solely on clicks, incrementality tests isolate the causal impact of individual channels and/or touchpoints.

One of the biggest benefits is improved budget allocation and ROI clarity.

Here’s a real-world example of that:

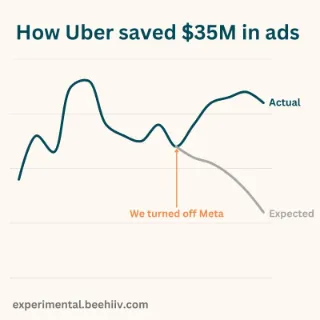

Uber used incrementality testing to address concerns that their heavy investment in performance marketing, specifically on Meta ads, was merely capturing organic growth rather than driving new users.

They conducted a three-month geo-based experiment across the U.S. and Canada and compared the results against a marketing mix model (MMM). Uber discovered that these ads were virtually non-incremental, contributing only a single-digit percentage to actual growth.

This data-driven insight allowed the company to confidently cut $35 million in ineffective spend and reallocate those resources toward higher-performing initiatives, such as driver acquisition and Uber Eats.

Another benefit is smarter strategic decisions.

Incrementality testing shows how different audience segments respond to your messages and whether campaigns are truly driving behavior change.

For example, controlled holdout tests can reveal that only a portion of leads from a paid campaign were incremental, while the rest would have converted through organic channels.

This clarity enables you to optimize your product, email, PPC, and social strategies on real impact.

Courtney Bittelari, Senior Director of Analytics at New Engen, puts it like this:

“If a test shows less impact than expected, it does not mean the channel is useless. It usually means the role of that channel is different than last-click or platform reporting suggests.”

In short, incrementality testing helps you:

- Cut wasted spend by eliminating non-incremental campaigns and reallocating funds where they drive true outcomes.

- Measure causal impact, not just correlation, improving decision accuracy at every step.

- Improve growth outcomes by focusing on what genuinely moves KPIs like revenue, leads, and conversions.

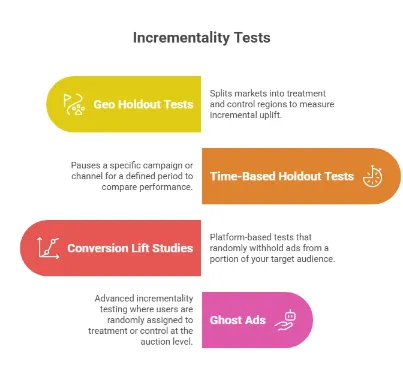

4 Practical Incrementality Tests You Can Run

Once you’ve recognized that last-click attribution is over-crediting certain channels and obscuring the true incremental lift of your marketing efforts, the next step is to run structured incrementality tests that isolate causal impact rather than just attributed conversions.

Below are four practical tests you can run, ranging from foundational experiments any team can implement to more advanced designs that give you deeper insights into your channels’ effectiveness.

1. Geo Holdout Tests

A geo holdout test or geo-lift test splits markets into treatment and control regions. The treatment region continues to receive your campaign (e.g., search, PPC, Facebook), while the control region has the campaign paused.

Then you compare outcomes between these regions over time to measure the incremental uplift a campaign creates.

How it helps:

This test directly measures causal impact on KPIs like conversion, revenue, and leads by isolating the treatment from the rest of the market. It is most useful when channels like Google Ads or paid social appear to correlate with performance, but you need to know whether they actually cause incremental outcomes.

In our experience, this is where many teams go wrong. They assume strong correlations in dashboards automatically mean causation. Controlled experiments reveal whether the channel actually drives new revenue.

Example:

Let’s say a mid-sized DTC company runs search and social ads in four U.S. states (e.g., CA, TX, FL, NY). For a 30-day test, the brand pauses paid campaigns in two states (control) while continuing spend in the other two (treatment).

If total revenue per capita over the test period is 15% higher in treatment than in control, and the regions were comparable beforehand, that’s a clear signal of incremental impact.
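As a minimal sketch of that comparison, the snippet below computes revenue per capita for each group and the relative lift between them. The populations and revenue totals are hypothetical placeholders chosen to reproduce the 15% figure, and the function names are illustrative only.

```python
def revenue_per_capita(revenue: float, population: int) -> float:
    """Total revenue divided by the population of the region group."""
    return revenue / population


def relative_lift(treatment: float, control: float) -> float:
    """Relative difference of treatment over control (0.15 means 15% higher)."""
    return (treatment - control) / control


# Hypothetical 30-day totals for the treatment states and the control states.
treatment_rpc = revenue_per_capita(revenue=1_150_000, population=50_000_000)
control_rpc = revenue_per_capita(revenue=1_000_000, population=50_000_000)

lift = relative_lift(treatment_rpc, control_rpc)
print(f"Revenue per capita lift: {lift:.1%}")
```

Normalizing by population keeps the comparison fair when the treatment and control regions differ in size.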

When to use:

- You want to understand the impact of channel investments geographically

- You run activities that can be localized (search, social, display)

- You have enough traffic and budget across regions

Tools like Google Analytics, Looker/BigQuery, or incrementality platforms can help track and compare regional conversions and revenue over the test period.

Pro tip: Make sure control regions are similar in size and audience profile to treatment regions, and avoid overlapping media exposure that could contaminate results.

2. Time-Based Holdout Tests

A time-based holdout test measures incrementality by pausing a specific campaign or channel for a defined period (for example, two weeks) and then comparing performance during the “off” window versus the “on” window, controlling for seasonality and other external factors.

Instead of splitting geography, you’re splitting time.

How it helps:

For many companies, this is the fastest way to measure true impact. It answers a brutally honest question: “If we stop this activity for a short period, does the business actually feel it?”

If revenue, conversions, or leads barely change, your last-click attribution model may be over-assigning credit to that channel. If performance drops meaningfully, you’ve uncovered measurable incremental lift.

Example:

Imagine you’re spending $60,000 per month on branded search, and you pause branded campaigns for 14 days.

What happens?

- If total daily revenue drops 3-5%, you’ve likely uncovered incremental value.

- If revenue stays flat and organic search absorbs most traffic, you’ve revealed a performance gap between reported conversions and actual incremental outcomes.

The key is comparing total business performance, not platform-reported conversions.
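A rough way to run that comparison is to average total daily revenue in each window and look at the percent change. The sketch below assumes you have already exported daily blended revenue. The figures are hypothetical, and no seasonality adjustment is applied.

```python
from statistics import mean


def pct_change(on_window: list[float], off_window: list[float]) -> float:
    """Percent change in average daily revenue from the "on" to the "off" window."""
    on_avg = mean(on_window)
    off_avg = mean(off_window)
    return (off_avg - on_avg) / on_avg


# Hypothetical total daily revenue across all channels, in dollars.
revenue_on = [50_000, 52_000, 49_000, 51_000, 48_000, 50_000, 50_000]
revenue_off = [48_000, 49_500, 47_000, 49_000, 46_500, 48_000, 48_000]

change = pct_change(revenue_on, revenue_off)
print(f"Change in average daily revenue while paused: {change:.1%}")
```

With these placeholder numbers the paused window averages 4% lower, which would fall inside the 3-5% range described above.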

When to use:

- You cannot isolate by region

- You need fast answers within one month

- You suspect branded search, retargeting, or email is being over-credited

- Your marketing budget is under scrutiny, and you need defensible evidence

Critical guardrails:

- Avoid running during major promotions or holidays

- Keep other channels stable

- Run long enough to smooth daily volatility (minimum 2-4 weeks, depending on traffic volume)

- Track blended KPIs

3. Conversion Lift Studies (Platform-Based)

Conversion lift studies are built-in incrementality tests offered by platforms like Google and Facebook (Meta).

Instead of pausing activity manually, the platform randomly withholds ads from a portion of your target audience (control group) while continuing delivery to the exposed group (treatment). The platform then measures the difference in conversion rate between the two groups to estimate incremental lift.

This method is statistically rigorous because randomization controls for many external factors automatically. Also, it’s one of the cleanest ways to measure causal impact within a single channel.

From what we’ve seen working with performance teams, this is where many marketers start realizing how much last-click attribution can overstate results.

According to Meta, businesses that ran 15 conversion lift experiments in a year saw a 30% improvement in ad performance.

How it helps:

Platform lift studies help you:

- Measure incremental conversion and incremental revenue

- Validate whether an ad campaign is driving real business outcomes

- Compare incremental ROAS (iROAS) vs platform-reported ROAS

- Increase confidence in marketing ROI when reporting to leadership

This is particularly useful when paid social or search looks weak in platform dashboards but strong in blended results, or when branded search appears unstoppable and you want defensible proof before scaling spend.

Example:

Let’s say you’re spending $100,000/month on paid social. Platform-reported data shows a 4.0 ROAS and 2,000 attributed conversions.

You run a conversion lift study on the platform, and the results show a 12% incremental lift, but only 800 conversions were incremental.

That means 60% of the attributed conversions would have happened anyway.

Your new incremental ROAS calculation would be:

Incremental ROAS = Incremental Revenue ÷ Spend = (800 × AOV) ÷ $100,000

That number (not the reported 4.0 ROAS) reflects the true impact of your campaign.
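To make the arithmetic concrete, here is a minimal sketch using the figures from this example. One assumption is added: the average order value (AOV) is backed out of the platform-reported ROAS and treated as identical for incremental and non-incremental orders.

```python
spend = 100_000                 # monthly paid social spend ($)
reported_roas = 4.0             # platform-reported ROAS
attributed_conversions = 2_000  # platform-attributed conversions
incremental_conversions = 800   # from the lift study

# Assumption: AOV is implied by the platform-reported figures and is the
# same for incremental and non-incremental orders.
reported_revenue = reported_roas * spend
aov = reported_revenue / attributed_conversions

incremental_revenue = incremental_conversions * aov
incremental_roas = incremental_revenue / spend
non_incremental_share = 1 - incremental_conversions / attributed_conversions

print(f"Implied AOV: ${aov:,.0f}")
print(f"Incremental ROAS: {incremental_roas:.2f}")
print(f"Share that would have happened anyway: {non_incremental_share:.0%}")
```

Under that AOV assumption, incremental ROAS comes out at 1.6 versus the reported 4.0.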

Minimum requirements and considerations:

- Platforms may require a minimum spend (varies by region and advertiser size)

- You need enough audience size and traffic volume

- Tests typically run 2-4 weeks

- Results are reported with statistical confidence intervals

When to use:

It is useful when you need faster answers than a full geo experiment, or are focusing on a single channel.

4. Ghost Ads (Auction-Based Testing)

Ghost Ads (also called auction-based randomized testing) are an advanced form of incrementality testing where users are randomly assigned to treatment or control at the auction level, before an ad is even served.

Here’s how it works in simple terms:

- When a user enters an ad auction, the system randomly assigns them to:

  - Treatment → eligible to see your ad

  - Control → withheld from seeing your ad

- The platform tracks conversions for both groups.

- The difference in outcomes measures the causal impact of the campaign.

Unlike basic holdouts, ghost ads preserve auction dynamics, so your campaign delivery, bids, and costs remain realistic. This makes the measurement more precise and scalable.

How it helps:

Ghost Ads solve a major limitation of simpler tests:

- They prevent budget distortion that happens when you manually pause campaigns.

- They maintain real bidding pressure and competition.

- They eliminate selection bias common in retargeting and bottom-funnel segments.

For large advertisers running high-spend search or programmatic campaigns, this method produces some of the most statistically reliable incrementality insights available.

Example:

Imagine your PPC campaign reports a 3.8 ROAS with $500,000 in attributed revenue and $130,000 in spend. Using Ghost Ads methodology, you discover that the control group's conversion rate is 4.2%, while the treatment group's is 4.8%.

Absolute lift: 0.6 percentage points

Relative lift: around 14%

As a rough approximation, if only about 14% of the attributed revenue is incremental, incremental revenue is roughly $70,000, and incremental ROAS is about 0.54.

That’s a dramatic difference between attribution and true incremental performance.
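The same calculation can be sketched in a few lines. The snippet mirrors the simplification used in this example, which treats the relative lift as the share of attributed revenue that is incremental. That is an approximation, not a platform-defined formula.

```python
attributed_revenue = 500_000  # platform-attributed revenue ($)
spend = 130_000               # campaign spend ($)
control_cvr = 0.042           # control group conversion rate
treatment_cvr = 0.048         # treatment group conversion rate

reported_roas = attributed_revenue / spend
absolute_lift = treatment_cvr - control_cvr   # as a proportion
relative_lift = absolute_lift / control_cvr

# Simplification from the example: relative lift is used as the
# incremental share of attributed revenue.
incremental_revenue = relative_lift * attributed_revenue
incremental_roas = incremental_revenue / spend

print(f"Reported ROAS: {reported_roas:.1f}")
print(f"Absolute lift: {absolute_lift * 100:.1f} pp")
print(f"Relative lift: {relative_lift:.1%}")
print(f"Incremental ROAS: {incremental_roas:.2f}")
```

Because the code keeps the unrounded lift (about 14.3%), its outputs land slightly above the rounded figures in the text.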

When to use:

This approach is best suited when:

- You have significant scale (high traffic, large audience sizes)

- You need precise measurement accuracy

- You’re running complex, multi-market campaigns

- You want reliable insights for executive-level reporting

- You operate within platforms that support auction-level experimentation

Tips on Designing a Clean Incrementality Test

At 9AM, we’re constantly designing incrementality tests alongside attribution to get a clear picture across channels.

Here’s what we recommend:

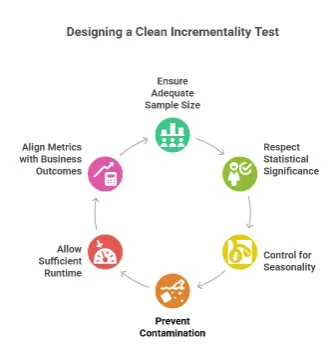

- Ensure adequate sample size: Incremental lift in marketing is typically small, which means underpowered tests frequently produce misleading or inconclusive results. Before launching, estimate the required audience and conversion volume to reach your preferred confidence levels and avoid wasting marketing budget on noisy outcomes.

- Respect statistical significance: Not every observed difference represents real causal impact. Therefore, always review confidence intervals and avoid acting on results that cross zero or relying on directional trends without statistical backing.

- Control for seasonality and external factors: Promotions, holidays, product launches, or demand spikes can distort test results. Run control and treatment groups simultaneously where possible, and avoid periods with unusual business volatility.

- Prevent contamination across segments or markets: If your control group is indirectly exposed to marketing (through national campaigns, organic spillover, or overlapping audiences), lift will be diluted. Carefully separate audience segments or regions and monitor for unintended cross-exposure.

- Allow sufficient runtime: Don’t stop tests early, as that increases the risk of false conclusions. Run experiments long enough to capture a full purchase cycle and smooth daily volatility (ideally at least 2-4 weeks for most digital campaigns).

- Align metrics with business outcomes: Track blended revenue, incremental profit, and true business impact rather than relying solely on platform-reported conversions. Remember, the goal of incrementality testing is to inform smarter growth decisions.
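For the sample-size point above, one common way to estimate the required audience is the standard two-proportion z-test approximation. The sketch below assumes a two-sided 5% significance level and 80% power, which are conventional defaults rather than requirements, and it borrows the 4.2% vs. 4.8% conversion rates from the Ghost Ads example purely for illustration.

```python
from math import ceil
from statistics import NormalDist


def sample_size_per_group(p_control: float, p_treatment: float,
                          alpha: float = 0.05, power: float = 0.80) -> int:
    """Approximate users needed in each group to detect the difference
    between two conversion rates with a two-sided z-test."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    variance = p_control * (1 - p_control) + p_treatment * (1 - p_treatment)
    effect = p_treatment - p_control
    return ceil((z_alpha + z_beta) ** 2 * variance / effect ** 2)


n = sample_size_per_group(0.042, 0.048)
print(f"Users needed per group: {n:,}")
```

Under these assumptions the estimate is on the order of 19,000 users per group, and it grows quickly as the expected lift shrinks, which is why small lifts need large audiences.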

How to Interpret Results from Incrementality Tests

Note that running incrementality tests is only half the battle. The real leverage comes from correctly interpreting the results and translating them into smarter decisions, faster action, and better capital allocation across your marketing campaigns.

From what we’ve observed across different marketing teams, this is where the confusion begins. Many teams run experiments correctly, but the interpretation step ends up oversimplified or misread.

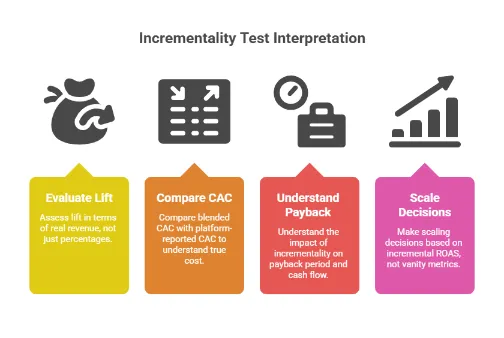

1. Evaluate Lift in Terms of Real Revenue

Most tests report absolute lift (e.g., +0.8 percentage points), relative lift (e.g., +16%), incremental conversions, and confidence intervals.

Here’s where marketers get tripped up. A “small” lift can still mean meaningful incremental revenue.

For example, you have:

- Control conversion rate: 5.0%

- Treatment conversion rate: 5.8%

- Absolute lift: +0.8 pp

- Relative lift: +16%

If your campaign reached 100,000 users:

Incremental conversions = 0.8% × 100,000 users = 800

At $150 AOV → $120,000 incremental revenue

This means the campaign generated 800 conversions that likely would not have happened without the marketing exposure.

In practice, we often see teams underestimate results like this because the percentage lift appears modest at first glance. Financially, though, the impact can be substantial enough to justify or invalidate a large marketing budget.
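Here is a small sketch of the calculation above, extended with a 95% confidence interval for the absolute lift using a normal approximation. The example does not state the control group size, so a control group of 100,000 users is assumed here for illustration.

```python
from math import sqrt
from statistics import NormalDist

control_cvr = 0.050
treatment_cvr = 0.058
treatment_users = 100_000
control_users = 100_000  # hypothetical: not stated in the example
aov = 150

absolute_lift = treatment_cvr - control_cvr
relative_lift = absolute_lift / control_cvr
incremental_conversions = absolute_lift * treatment_users
incremental_revenue = incremental_conversions * aov

# 95% confidence interval for the absolute lift (normal approximation).
z = NormalDist().inv_cdf(0.975)
se = sqrt(control_cvr * (1 - control_cvr) / control_users
          + treatment_cvr * (1 - treatment_cvr) / treatment_users)
ci_low, ci_high = absolute_lift - z * se, absolute_lift + z * se

print(f"Incremental conversions: {incremental_conversions:,.0f}")
print(f"Incremental revenue: ${incremental_revenue:,.0f}")
print(f"95% CI for absolute lift: {ci_low * 100:.2f} to {ci_high * 100:.2f} pp")
```

With these assumed group sizes the interval stays above zero, so the lift would count as statistically distinguishable from no effect. A smaller control group would widen it.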

2. Compare Blended CAC with Platform-Reported CAC

Last-click dashboards show you platform CAC. Incrementality reveals blended CAC.

For example, your Meta dashboard may report a $40 CAC for 2,000 conversions. And then your incrementality test shows only 50% of conversions are incremental. What does that mean?

- True incremental conversions = 1,000

- True incremental CAC = $80

This gap is why incrementality belongs inside a broader measurement ecosystem. If you don’t adjust CAC for incrementality, you risk scaling campaigns that look efficient but don’t increase total sales.
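The adjustment in this example is simple enough to sketch directly. Spend is backed out from the platform-reported CAC and conversion count, and the variable names are illustrative.

```python
platform_cac = 40               # $ per platform-attributed conversion
attributed_conversions = 2_000  # conversions reported in the dashboard
incremental_share = 0.50        # from the incrementality test

spend = platform_cac * attributed_conversions
incremental_conversions = attributed_conversions * incremental_share
incremental_cac = spend / incremental_conversions

print(f"Spend: ${spend:,.0f}")
print(f"Incremental conversions: {incremental_conversions:,.0f}")
print(f"Incremental CAC: ${incremental_cac:,.0f}")
```

The spend is unchanged. Only the denominator shrinks, which is why halving the incremental share doubles the cost per truly incremental customer.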

3. Understand the Payback Period Impact

Incrementality doesn’t just change CAC. It also changes payback models. If incremental CAC doubles after testing, your payback window might extend from, say, three months to six months. This affects cash flow, inventory decisions, and even scaling strategy.

And your finance team cares about this far more than reported ROAS. When interpreting results, always recalculate:

Incremental ROAS = Incremental Revenue/Spend

And then compare that to your required payback threshold.
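As a simple illustration of the payback effect, the sketch below treats payback as CAC divided by monthly contribution margin per customer. That is a common simplification that ignores churn and discounting, and the CAC and margin values are hypothetical.

```python
def payback_months(cac: float, monthly_margin_per_customer: float) -> float:
    """Months of contribution margin needed to recover acquisition cost."""
    return cac / monthly_margin_per_customer


# Hypothetical inputs: $20 monthly contribution margin per customer.
monthly_margin = 20
platform_cac = 60       # CAC as reported by the platform
incremental_cac = 120   # CAC after a test shows 50% incrementality

print(f"Payback on platform CAC: {payback_months(platform_cac, monthly_margin):.0f} months")
print(f"Payback on incremental CAC: {payback_months(incremental_cac, monthly_margin):.0f} months")
```

Because payback scales linearly with CAC in this model, doubling incremental CAC doubles the payback window.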

4. Scale Decisions Based on Incremental ROAS

The most important interpretation question is: Should we scale, hold, or cut?

Here’s what we recommend. If incremental ROAS is:

- Well above target → Scale confidently

- Slightly above break-even → Optimize before scaling

- At or below break-even → Reduce or reallocate

This is how you move from vanity reporting to disciplined capital deployment.
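One way to make that rule explicit is a small decision function. The sketch below simplifies the three tiers into two thresholds, and both the break-even and target ROAS values are hypothetical placeholders that each business would set from its own margins.

```python
def scaling_decision(incremental_roas: float, break_even_roas: float,
                     target_roas: float) -> str:
    """Map incremental ROAS to a scale / optimize / reduce recommendation."""
    if incremental_roas <= break_even_roas:
        return "reduce or reallocate"
    if incremental_roas < target_roas:
        return "optimize before scaling"
    return "scale"


# Hypothetical thresholds; real values depend on margins and payback goals.
BREAK_EVEN_ROAS = 1.25
TARGET_ROAS = 2.5

for iroas in (3.0, 1.6, 0.54):
    decision = scaling_decision(iroas, BREAK_EVEN_ROAS, TARGET_ROAS)
    print(f"iROAS {iroas:.2f} -> {decision}")
```

Keeping the thresholds as named parameters makes it easy to revisit the rule when margins or payback requirements change.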

Measure True Marketing Impact with 9AM

If you’ve made it this far, one thing should be clear. Last-click attribution is simple to implement, but it rarely reflects the real drivers of growth. Incrementality testing gives you a much clearer picture of what your marketing actually causes.

Modern marketing teams don’t need more dashboards. They need a measurement framework that connects marketing activity to revenue and better investment decisions.

That’s where working with a specialist agency like 9AM becomes a strategic advantage.

Most agencies optimize inside platforms. We create systems across the broader measurement ecosystem and then buy digital media (social, programmatic, and/or connected TV) based on findings.

Instead of relying on platform-reported conversions, we can help you design and launch clean incrementality tests, calculate incremental profit and blended CAC, and align marketing KPIs with financial outcomes.

Book a strategy call now to evaluate your current measurement setup and identify where incremental growth may be hiding!

FAQs

When is last-click attribution valuable?

Last-click attribution can be useful for short-term optimization and for understanding which touchpoints correlate with conversions in real time, especially in high-volume channels like PPC or email campaigns, where immediate actions matter.

What is the difference between A/B testing and incrementality testing?

A/B testing traditionally compares creative variants to see which performs better. Incrementality testing, on the other hand, answers a broader causal question: “Did running these ads create additional conversions or revenue that wouldn’t have happened anyway?”

Is incrementality testing only for large brands?

No. While some advanced designs, like geo experiments or auction-level ghost ads, require higher traffic and larger marketing budgets, basic incrementality tests (time-based holdouts, platform conversion lift studies from Google or Meta) can be run by mid-market teams, too.

How long should an incrementality test run?

Well-designed incrementality tests should run long enough to capture normal variability in traffic and conversions. For many digital channels, that’s a minimum of 2-4 weeks.

How does incrementality testing differ from attribution?

Attribution models like last-click analyze the sequence of touchpoints that led to a conversion and assign credit accordingly. Incrementality testing, on the other hand, directly measures causal impact by comparing outcomes between controlled groups (exposed vs. unexposed).

Which channels benefit the most from incrementality tests?

All channels can benefit, but incrementality is especially valuable with paid social, search campaigns, and retargeting.

Can 9AM handle incrementality testing?

Yes. At 9AM, we design and run incrementality tests as part of our broader analytics and measurement services. This helps brands understand the true impact of their marketing, improve ROAS, and align media investment with both short- and long-term growth goals.

Does 9AM help brands move beyond last-click attribution?

Yes. At 9AM, we help you move beyond last-click reporting by building measurement frameworks that include incrementality testing, advanced attribution, and unified analytics dashboards. This allows you to understand which channels actually drive incremental growth.

Appendix

- https://marketingltb.com/blog/statistics/marketing-attribution-statistics/

- https://www.emarketer.com/content/just-1-5-marketers-confident-last-click-attribution

- https://funnel.io/blog/incrementality-testing

- https://www.linkedin.com/posts/sswamina3_we-turned-off-ads-on-meta-saw-no-business-activity-7289318684186030082-kyhX/

- https://www.facebook.com/business/measurement/conversion-lift

- https://www.softcrylic.com/blogs/incrementality-measurement-ghost-ads/